Combinatorial matrix theory

AMITA

I would prefer they stay focused on

Combinatorial Matrix Theory.

Combinatorial matrix theory is a rich branch of mathematics that combines combinatorics (the enumeration, combination, and permutation of sets of elements and the mathematical relations that characterize their properties), graph theory (the mathematical study of the properties of the formal mathematical structures consisting of vertices and edges called graphs; discussed in the math notes for Episode 401, "Trust Metric"), and linear algebra (the study of linear sets of equations and their transformation properties).

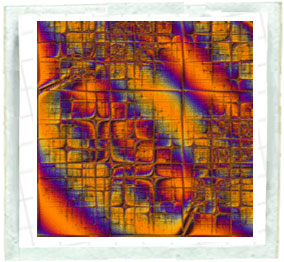

The animation at the top of this page shows the discrete Fourier transform of the

n x n matrix with matrix elements

given by ![]() , where

, where ![]() is the divisor function of order 4 (i.e., the sum

of divisors to the fourth power) generalized to complex numbers (in this case, the Gaussian integers). Since the Fourier

transform of a complex number is itself complex, the animation shows only the complex argument (also called the complex modulus), which is simply given by

is the divisor function of order 4 (i.e., the sum

of divisors to the fourth power) generalized to complex numbers (in this case, the Gaussian integers). Since the Fourier

transform of a complex number is itself complex, the animation shows only the complex argument (also called the complex modulus), which is simply given by

![]() . As the size n of the matrix increases, more and more beautiful structure is

revealed. The structure corresponds to periodicities in the divisor function as well as the relative strengths of

periodic components. The application of Fourier transforms to find periodicities in data is an extremely powerful tool

that is very commonly used in signal- and image-processing applications.

. As the size n of the matrix increases, more and more beautiful structure is

revealed. The structure corresponds to periodicities in the divisor function as well as the relative strengths of

periodic components. The application of Fourier transforms to find periodicities in data is an extremely powerful tool

that is very commonly used in signal- and image-processing applications.

The book pictured above, by Richard Brualdi and Herbert Ryser, is a classic text on combinatorial matrix theory. This book investigates in detail the theory of matrices with prescribed combinatorial properties, including mathematical objects called permanents and Latin squares (which are important in experimental design). The book ends by applying combinatorial arguments to prove classical algebraic theorems, including the so-called Cayley-Hamilton theorem (which states that an n×n matrix A is annihilated by its characteristic polynomial). The graph shown on the cover is known as the Shrikhande graph.

In order to understand the significance of the Shrikhande graph, we must first discuss what it means for a graph to be regular. A regular graph of degree r is a graph all of whose vertices have the same number of edges incident on them. Regular graphs therefore have a high degree of symmetry, and include a number of particularly beautiful examples, such as those illustrated above.

There is a class of graphs known as strongly regular graphs that are even more symmetric than regular graphs. In a strongly regular

graph, not only does each vertex have the same number of neighbors (usually denoted k), but every adjacent pair

of vertices has the same number (usually denoted ![]() ) of common neighbors, and every nonadjacent pair of vertices has the

same number (usually denoted

) of common neighbors, and every nonadjacent pair of vertices has the

same number (usually denoted ![]() ) of common neighbors. A strongly regular graph on

) of common neighbors. A strongly regular graph on ![]() vertices is therefore said to have parameters

vertices is therefore said to have parameters ![]() . Strongly regular graphs are very special mathematical objects that have connections to

other areas of mathematics, including group

theory and statistics. One class of

strongly regular graphs is the so-called lattice graphs Ln,

whose vertices can be thought of as squares on an n×n chessboard, and whose edges can be thought of as

pairs of squares that are connected by a possible move by a rook chess piece. It turns out that the lattice graphs are

strongly regular, in particular L4, which is

strongly regular with parameters (16, 6, 2, 2). Shrikhande established that there exists exactly one other strongly

regular graph with those parameters, and that graph, illustrated below in another embedding, now bears his name.

. Strongly regular graphs are very special mathematical objects that have connections to

other areas of mathematics, including group

theory and statistics. One class of

strongly regular graphs is the so-called lattice graphs Ln,

whose vertices can be thought of as squares on an n×n chessboard, and whose edges can be thought of as

pairs of squares that are connected by a possible move by a rook chess piece. It turns out that the lattice graphs are

strongly regular, in particular L4, which is

strongly regular with parameters (16, 6, 2, 2). Shrikhande established that there exists exactly one other strongly

regular graph with those parameters, and that graph, illustrated below in another embedding, now bears his name.

Alternate reality games

MEGAN

Weyburn evidently came here to play

something called an Alternate

Reality Game-- Privacy?

They head into the building--

AMITA

Primacy.

MEGAN

You know it?

AMITA

I play it.

An alternate reality game (ARG) projects a fictional story onto the real world. To progress through the story in an alternate reality game, various challenges must be overcome or problems must be solved. The following list summarizes a number of well-known alternate reality games:

In the recent alternate reality game "Why So Serious," which supports the upcoming Batman film The Dark Knight, Batman's nemesis, The Joker, is collecting minions for his nefarious purposes. Toward this end, various puzzles and scavenger hunts are provided to his "clowns" to test their worthiness. The cluses for this game are distributed in actual locations across the United States, and dozens of teams must cooperate in order to locate and solve them.

Evolutionary algorithms

CHARLIE

I could adapt an evolutionary

algorithm to search the game

history for abnormally aggressive

activity.

My algorithm would recognize this

typical activity and ignore it,

seeking out abnormal actions.

Think of a pond--

AUDIENCE VISION

An idyllic pond-- plants, water, insects, birds, frogs, etc.

All look healthy and in balance. A bird eats an insect.

CHARLIE (V.O.) (cont'd)

A pond is a healthy ecosystem where

predators and prey are in balance--

however, if an outside predator is

introduced--

A bird is snatched by something unseen. As more birds

disappear, insects go unchecked and devour the plants.

CHARLIE (V.O.) (cont'd)

The ecosystem changes. An

evolutionary algorithm highlights

these abnormal alterations--

In general, the field of evolutionary computation describes the use of iterative stochastic optimization algorithms. Such processes are often inspired by biological mechanisms of evolution, and particular approaches to evolutionary computation go under names such as evolutionary programming, evolution strategies, genetic algorithms, and genetic programming. The term stochastic optimization refers to the minimization (or maximization) of a function in the presence of randomness in the optimization process. The basic idea is to try to mimic a simple picture of natural selection in order to find a good algorithm. Common methods of stochastic optimization include direct search methods (such as the Nelder-Mead method), stochastic approximation, stochastic programming, and miscellaneous methods such as simulated annealing and genetic algorithms. Alert readers will recall that we have already encountered and applied the evolutionary computation method known as differential evolution to the optimal placement of police units in our write-up for Episode 401 "Trust Metric."

There are a many different types of evolutionary computation and genetic algorithms. In particular, the generic algorithm approach, developed by John Holland in 1975, created an electronic organism in the form of a binary string (chromosome), and then used genetic and evolutionary principles of fitness-proportionate selection for reproduction (including random crossover and mutation) to search enormous solution spaces efficiently. So-called genetic programming languages apply the same principles, using an expression tree instead of a bit string as the "chromosome."

The Higgs boson

LARRY

Well, I have received a rather tantalizing

offer to join the D Zero team searching

for the Higgs boson.

CHARLIE

Well all right. The God Particle. Which

would be a continuation of your spiritual

seeking as well as your quest for a

Unified Theory?

LARRY

Faith in science is still faith, is

it not?

AMITA

Now that's very cool, Larry, I think you

should continue with your search for

supersymmetric particles.

LARRY

You know, smashing protons at 99.99 %

of the speed of light-- all to locate

a single fragment which would move

us one step closer to unifying all

physics, explaining how the Old

One created the universe-- what

could be more spiritual?

There are four fundamental forces in the universe: gravitational, electromagnetic, weak, and nuclear. For more than the last hundred years, physicists have sought to unite the description of these four forces. Einstein himself devoted a substantial part of his life to this goal. Not long after the rise of quantum mechanics, quantum field theory was born. It was applied to microscopic phenomena that involve electrons and photons. The predictions of this theory (quantum electrodynamics) agree with experimentally measured values to astonishing precision. The inclusion of the weak and the nuclear force in quantum field theory resulted in a framework known as the standard model--a beautiful theoretical structure that unites three of the known forces of the world. The creation and developments of the standard model took physicists decades, and resulted in the disbursement of a number of Nobel Prizes along the way.

While many aspects of the standard model have been experimentally confirmed to good precision, one of the main unconfirmed aspects of the theory is the existence (and the properties) of the so-called Higgs boson. This particle is a very important theoretical ingredient of the theory, so finding it in nature is necessary to ensure the full correctness of the standard model. Despite substantial international efforts, the Higgs particle has not been found in accelerator experiments. The D0 experiments currently conducted at the Fermi National Accelerator Laboratory (Fermilab) in Batavia, Illinois, possibly have the capacity to detect indications of the Higgs boson. The LHC (large hadron collider) at CERN, which will begin experiments in mid-2008, will conduct various experiments to find the Higgs particle and measure its properties. Therefore, within the framework of well-established scientific theories, the Higgs particle remains one of the most important--but also the most elusive--components.

Trivial or deep?

LARRY

You know, smashing protons at 99.99 %

of the speed of light-- all to locate

a single fragment which would move

us one step closer to unifying all

physics, explaining how the Old

One created the universe-- what

could be more spiritual?

CHARLIE

Not a trivial activity.

To a mathematician, the word trivial describes a result or theorem that is mathematically obvious (for example, is true by definition). More generally, trivial is used to describe any result that requires little or no effort to derive or prove. The word originates from the Latin trivium, which was the lower division of the seven liberal arts in medieval universities (cf. quadrivium).

Examples of trivial mathematical structures include trivial basis, trivial bundle, trivial group, trivial loop, trivial module, trivial representation, trivial ring, and trivial topology.

According to the Nobel-Prize-winning physicist Richard Feynman, mathematicians designate any theorem as trivial once a proof has been obtained--no matter how difficult the theorem was to prove in the first place. There are therefore exactly two types of true mathematical propositions: trivial ones, and those that have not yet been proven.

The opposite of a trivial theorem is a deep theorem. Qualitatively, a deep theorem is a theorem whose proof is long, complicated, difficult, or appears to involve branches of mathematics that are not obviously related to the theorem itself. The quadratic reciprocity theorem in number theory is sometimes cited as an example of a deep theorem.

References

Brualdi, R. and Ryser, H. J. Combinatorial Matrix Theory. New York: Cambridge University Press, 1991.

CERN. "LCH - The Large Hadron Collider." http://lhc.web.cern.ch/lhc/

Fermi National Accelerator Laboratory. "The D0 Experiment." http://www-d0.fnal.gov/

Feynman, R. P. and Leighton, R. "A Different Set of Tools." In 'Surely You're Joking, Mr. Feynman!': Adventures of a Curious Character. New York: W. W. Norton, pp. 69-72, 1997.

Nobel Foundation. "The Nobel Prize in Physics 2004." http://nobelprize.org/nobel_prizes/physics/laureates/2004/public.html

Shanks, D. "Is the Quadratic Reciprocity Law a Deep Theorem?" §2.25 in Solved and Unsolved Problems in Number Theory, 4th ed. New York: Chelsea, pp. 64-66, 1993.

Shrikhande, S.-C. S. "The Uniqueness of the L2 Association Scheme." Ann. Math. Stat. 30, 781-798, 1959.