Floyd-Warshall algorithm

As Colby lays a MAP out on Charlie's desk. Los Angeles, with

eleven points flagged... Charlie and Larry examining it --

COLBY

It's a pretty basic GPS -- no data on

the times or the routes --

CHARLIE

-- just the ten most recent

destinations he typed in.

COLBY

Gives us eleven points, including the

meth lab. I see a map with a bunch of

dots on it -- I figure you can tell

me something -- maybe even find his

stash house --

CHARLIE

It's not really enough data for any

kind of inference... maybe some

application of Floyd-Warshall --

The Floyd-Warshall algorithm is a procedure for efficiently and simultaneously finding the shortest path (i.e., the graph geodesic) between every pair of vertices in a weighted and potentially directed graph. (For more mathematical background on graphs and graph theory, see the Math Notes for Episode 401, "Trust Metric.")

While the Floyd-Warshall algorithm is asymptotically as fast as applying Dijkstra's algorithm (used by Charlie in the Season 3 episode "Money for Nothing" to find the most likely escape routes out of Los Angeles) repeatedly for each pair of vertices, in practice the Floyd-Warshall algorithm is much faster because of its conciseness and simplicity. In other words, it is a very efficient way of solving the so-called all-pairs shortest path problem.

For example, in the four-vertex directed weighted graph illustrated above (where directed edges are indicated by arrows, edge weights by black numbers next to the corresponding arrowhead, and vertex numbers as red labals next to the corresponding vertex), applying the Floyd-Warshall algorithm gives the graph distance matrix shown at right (where the shortest distance between vertex i and j is encoded as the (i, j)th matrix element). So, for example, the shortest distance between vertex 1 and vertex 3 is 3 + 5 = 8, which is the third entry in the first row. Similarly, the shortest distance between vertices 3 and 2 is 7 + 5 + 3 = 15, which is the second entry in the third row, and so on.

Because the Floyd-Warshall algorithm is essentially equivalent to the so-called transitive closure algorithm independently discovered by Bernard Roy in 1959 and Stephen Warshall in 1962, the method is commonly attributed to all three of these authors.

Brute force in mathematics

LARRY

Wouldn't the process of comparing all

potential routes between each pair of

points require a lot of brute force?

COLBY

There's an expression I don't

normally associate with... well...

For discrete problems in which no efficient solution-method is known, it might be necessary to test each possibility sequentially in order to determine if it provides a solution. Such examination of all possibilities is known variously as exhaustive search, direct search, or the "brute force" method. Exhaustive searching is effective only on problems that are small enough so that the search is completed in reasonable time. Of course, faster computers or distributed computation efforts (such as mersenne.org's search for Mersenne primes and Seventeen Or Bust's search for Sierpiński numbers of the second kind) can push the boundaries of what can be searched. However, finding an efficient algorithm is almost always preferable and can render previously intractable problems quite solvable.

An example of a brute force algorithm is direct search factorization. This method consists of searching for prime factors of a number by systematically performing trial divisions, usually using a sequence of increasing numbers. Direct search factorization is very inefficient, and can be used only with fairly small numbers. Many other more efficient algorithms have been devised for determining the prime factors of a given number, but it remains very difficult to build a general-purpose algorithm for this computationally "hard" problem.

Complexity theory is the theory of classifying problems based on how difficult they are to solve. A problem is assigned to the P-problem (polynomial-time) class if the number of steps needed to solve it is bounded by some power of the problem's size. A problem is assigned to the NP-problem (nondeterministic polynomial-time) class if it permits a nondeterministic solution and the number of steps to verify the solution is bounded by some power of the problem's size. The class of P-problems is a subset of the class of NP-problems, but there also exist problems that are not NP.

If a solution is known to an NP-problem, it can be reduced to a single polynomial-time verification. A problem is said to be NP-complete if it is NP and an algorithm for solving it can be translated into an algorithm that can solve any other NP-problem. Examples of NP-complete problems include the Hamiltonian circuit and traveling salesman problems. Linear programming, thought to be an NP-problem, was shown to actually be a P-problem by Leonid Khachian in 1979. It is not known if all apparently NP-problems are actually P-problems.

Therefore, unless it turns out that NP-problems are equivalent to P-problems (which seems unlikely, but has not yet been proved), NP-problems can only be solved by exhaustive search in the worst case.

Dirichlet tessellations

CHARLIE

We know the order that the locations

were arrived at -- what about doing a

time series analysis of overlapping

Dirichlet Tessellations?

Larry considers this for a beat -- then --

LARRY

Wow.

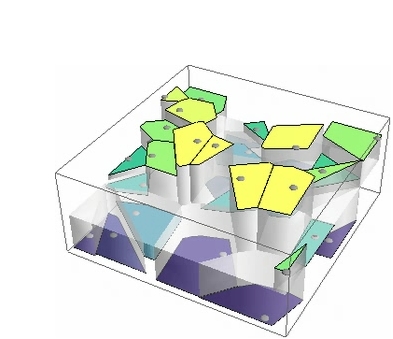

A Dirichlet tessellation, more commonly called a Voronoi diagram, is a partitioning of a plane containing a set of points into convex polygons such that each polygon contains exactly one generating point and every point in a given polygon is closer to its generating point than to any other. The individual cells resulting from this process are known as Dirichlet regions, Thiessen polytopes, or Voronoi polygons. The animation at the top of this page illustrates the structure of a Voronoi diagram for a set of randomly chosen points as the number of points is varied.

Voronoi diagrams were considered as early as 1644 by René Descartes and were used in 1850 by Lejeune Dirichlet in the investigation of positive quadratic forms. They were also studied by Georgy Voronoi in 1907, who extended the investigation of Voronoi diagrams to higher dimensions. Voronoi diagrams find widespread applications in areas such as computer graphics, epidemiology, geophysics, and meteorology. A particularly notable use of Voronoi diagrams was the analysis of the 1854 cholera epidemic in London, in which physician John Snow determined a strong correlation between death rates and living in proximity to a particular (and infected) water pump on Broad Street in Soho.

Voronoi diagrams can be computed in Mathematica using the command VoronoiDiagram, which returns a data structure corresponding to the Voronoi diagram of a given set of points, and can be displayed using DiagramPlot, which gives a graphical illustration of the Voronoi diagram (left figure above). Voronoi diagrams can be even more easily visualized in Mathematica using visualization functions such as ListDensityPlot and ListPlot3D (right two figures, respectively).

Graph entropy and universality classes

Charlie and Amita study the --

COMPUTER MAP --

The CRACKS growing away from the dots, some of them

intersecting and merging; overlaid by shaded zones of

different colors --

CHARLIE

See anything?

AMITA

Graph entropies and universality

classes... no news there.

CHARLIE

Maybe I'm wrong.

Graph entropy

A graph entropy H(G, P) is a rather technical, information-theoretical functional on graph G with probability distribution P on its vertex set. As discussed in the article "Graph Entropy: A Survey" by Gábor Simonyi, there are several equivalent definitions.

Graph entropies are so-named because they share characteristics with the standard concept of entropy from statistical mechanics and thermodynamics. Readers may be most familiar with entropy as a measure of the disorder of a system. The second law of thermodynamics states that the entropy of an isolated system that is not in equilibrium tends to increase over time and will approach a maximum value as it reaches equilibrium.

Informally, the entropy of a graph can be viewed as representing a physical system in which the nodes represent identical physical subsystems that can each be in some state. The directed edges in the graph indicate possible causal influences, and the dynamic behavior of the entire physical system is determined by functions assigned to the vertices which represent the underlying physical laws. As in physical systems subject to the laws of thermodynamics, the behavior of the functions is such that the overall entropy of the system is maximized. These concepts can be given a rigorous mathematical formulation; for details, see the recent paper by Søren Riis.

Dmitri Volchenkov and Philippe Blanchard have recently applied graph entropies to the study of potentially influential spaces in cities, a result apparently familiar to Amita. They found that while the connectivity entropy has a tendency to increase with the city size, the centrality entropy decreases, thus reflecting the global connectedness of cities.

Universality classes

In physics, a phase transition is the transformation from one thermodynamical state to another accompanied by an abrupt change in one or more physical properties. If a represents a physical property as a function of a parameter β and the parameter becomes critical at value βc, then the order parameter can be approximated by

where the exponent α is called a critical exponent of the system. Astonishingly, it is an empirical fact that the critical exponents in very different phenomena, such as superfluid transitions, quadratic mappings in mathematics, the formation of cracks in many materials (as possibly alluded to by Amita), and many others, end up being the same. Although the concept had existed decades earlier, the term "universality" was popularized by Leo Kadanoff in the late 1970s to describe this phenomenon, and systems sharing the same critical exponent are said to belong to the same universality class. Thus, many macroscopic phenomena may be grouped into a small set of universality classes, described by the set of relevant observables.

The Feigenbaum constant is a universal constant for functions approaching chaos via period doubling, as illustrated in the figure above. It was discovered by Mitchell Feigenbaum in 1975 while studying the fixed points of the iterated function

and characterizes the geometric approach of the bifurcation parameter to its limiting value as the parameter μ is increased for fixed x.

Universality is successfully explained by an advanced area of mathematics known as functional group renormalization theory.

Possibly apropos to Charlie and Amita's researches, Bin Jiang recently investigated the topological patterns of urban street networks using a large sample of 40 U.S. cities. This research found that all the topologies of urban street networks based on street-street intersection demonstrate a small world structure and have a scale-free property for both street length and connectivity degree. (Note that we previously encountered small world properties indirectly, through Charlie's work on the subject of Apollonian networks, in Episode 403, "Hollywood Homicide.") This research also found universality in the topological pattern of urban street networks for different cities, and even for different parts of a city of a reasonable size.

Cluster analysis

Charlie, Amita, and Larry a swirl of activity, as, on

THE MAP --

Almost an inversion of what we've previously seen; the

spiderwebbed "cracks" are faded, and the highlighted zones

are where no streets had been touched --

CHARLIE

How're we doing with the cluster

radius changes?

AMITA

Almost entered --

Cluster analysis is a technique used for classification of data in which data elements are partitioned into groups called clusters that represent collections of data elements that are proximate, based on a distance or dissimilarity function.

Cluster analysis is implemented in Mathematica as FindClusters[data] or FindClusters[data, n].

The NUMB3RS Season 1 pilot (2005) and Season 2 episode "Dark Matter" featured clusters and cluster analysis. In "Dark Matter," Charlie ran a cluster analysis to find connections between the students that seemed to be systematically singled out by the anomalous third shooter.

There are however various other sorts of specific clusters in mathematics, including s-clusters, b-clusters, and K-means clustering. It is therefore not entirely clear which sort of cluster radius Amita may be referring to.

References

Dirichlet, G. L. "Über die Reduktion der positiven quadratischen Formen mit drei unbestimmten ganzen Zahlen." J. reine angew. Math. 40, 209-227, 1850.

Feigenbaum, M. J. "Quantitative Universality for a Class of Non-Linear Transformations." J. Stat. Phys. 19, 25-52, 1978.

Floyd, R. W. "Algorithm 97." Comm. ACM 5-6, 345, 1962.

Jiang, B. "A Topological Pattern of Urban Street Networks: Universality and Peculiarity." Physica A, 384, 647-655, 2007.

Maris, H. J. and Kadanoff, L. P. "Teaching the Renormalization Group." American Journal of Physics 46, 652-657, 1978.

Pemmaraju, S. and Skiena, S. "All-Pairs Shortest Paths." § 8.1.2 in Computational Discrete Mathematics: Combinatorics and Graph Theory in Mathematica. Cambridge, England: Cambridge University Press, pp. 220-331, 2003.

Riis, S. "Graph Entropy, Network Coding and Guessing Games." arXiv preprint math.CO 0711.4175v1. 27 Nov 2007. [Abstract]

Roy, B. "Transitivité et connexité." C. R. Acad. Sci. Paris 249, 216-218, 1959.

Simonyi, G. "Graph Entropy: A Survey." In Combinatorial Optimization (Ed. W. Cook, L. Lovász, and P. Seymour). Providence, RI: Amer. Math. Soc., pp. 399-441, 1995. [PostScript].

Snow, J. On the Mode of Communication of Cholera. London: John Churchill, 1855. http://www.ph.ucla.edu/epi/snow/snowbook.html.

Volchenkov, D. and Blanchard, P. "Discovering Important Nodes through Graph Entropy Encoded in Urban Space Syntax." arXiv preprint physics.soc-ph 0709.4415. 27 Sep 2007. [Abstract]

Voronoi, G. "Nouvelles applications des paramètres continus à la théorie des formes quadratiques." J. reine angew. Math. 133, 97-178, 1907.

Warshall, S. "A Theorem on Boolean Matrices." J. ACM 9, 11-12, 1962.